Don't give up, you've got this! I had luck using Vulkan in kobold.cpp as a substitute for ROCm with my AMD RX 580 card.

Your primary gaming desktop GPU will be your best bet for running models. First check your card's exact specs; the more VRAM the better. Nvidia is preferred, but AMD cards work.

First, you can play with llamafiles to get started, no fuss no muss: download one and follow the quickstart to run it as an app.

Once you get it running, learn the ropes a little, and want more, like better performance or the latest models, you can spend some time installing and running kobold.cpp with cuBLAS for Nvidia or Vulkan for AMD to offload layers onto the GPU.
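
For what it's worth, the launch can be sketched as a small Python helper that builds the command line. The flag names (`--usecublas`, `--usevulkan`, `--gpulayers`) are how I remember KoboldCpp's options, so treat them as an assumption and check `--help` on your version; the model filename and layer count are made up for illustration.

```python
import shlex

def koboldcpp_command(model_path, gpu_vendor, gpu_layers):
    """Build a KoboldCpp launch command for the given GPU vendor.

    Assumption: these flag names match your KoboldCpp version (verify with --help).
    """
    backend_flags = {
        "nvidia": "--usecublas",  # CUDA/cuBLAS backend
        "amd": "--usevulkan",     # Vulkan backend, no ROCm needed
    }
    vendor = gpu_vendor.lower()
    if vendor not in backend_flags:
        raise ValueError(f"unknown GPU vendor: {gpu_vendor}")
    return [
        "python", "koboldcpp.py",
        "--model", model_path,
        backend_flags[vendor],
        "--gpulayers", str(gpu_layers),  # number of layers to offload to the GPU
    ]

# Hypothetical model file and layer count; adjust for your card's VRAM.
cmd = koboldcpp_command("mistral-7b-instruct.Q4_K_M.gguf", "amd", 20)
print(shlex.join(cmd))
```

If the model doesn't fit in VRAM, lowering the layer count keeps the rest on the CPU, which is slower but still works.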

If you have Linux, you can boot into a CLI-only environment to save some VRAM.

Connect to the program from your phone, Pi, or another PC through the host's local IP and open port.

In theory you can pool all your devices for distributed inference with something like exo.

First you need a program that reads and runs the models. If you're an absolute newbie who doesn’t understand anything technical, your best bet is llamafiles. They're extremely simple to run: just download one and follow the quickstart guide to start it like an application. They recommend the LLaVA model, but you can choose from several prepackaged ones. I like the Mistral models.

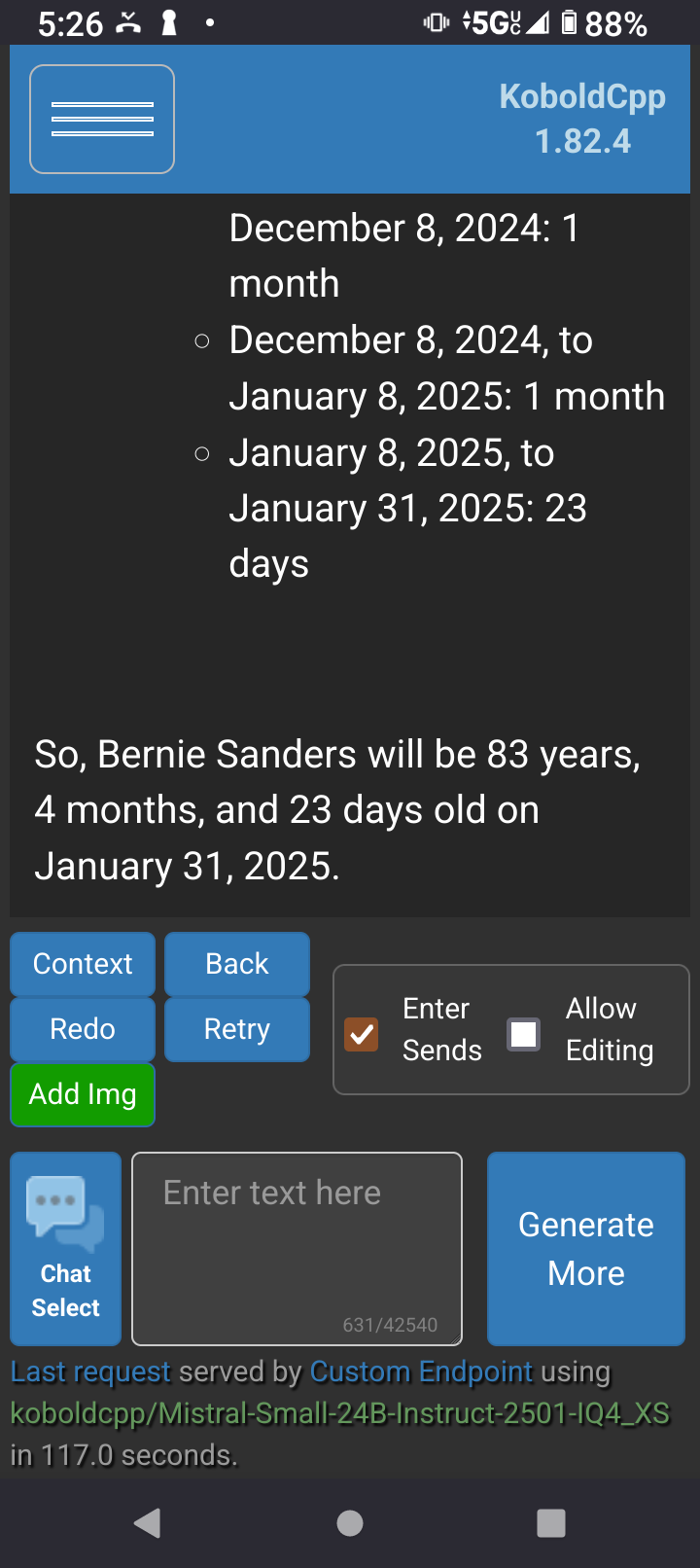

Then, once you get into it and want to run things more optimized and offloaded onto a GPU, you can spend a day trying to set up kobold.cpp.

They both start a local server. You can point your phone or another computer on the same WiFi network at it using the local IP address and port, or set up port forwarding for access over phone data.
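
Talking to that server from another machine can look something like this. It's a minimal sketch assuming the server exposes an OpenAI-style `/v1/chat/completions` endpoint, which as far as I know both llamafile and kobold.cpp do. The IP address is hypothetical, and the ports in the comments (8080 for llamafile, 5001 for kobold.cpp) are the defaults as I remember them, so check what your server prints on startup.

```python
import json
import urllib.request

def build_chat_request(host, port, prompt):
    """Return (url, body) for an OpenAI-style chat completion request."""
    url = f"http://{host}:{port}/v1/chat/completions"
    payload = {
        "model": "local",  # placeholder name (assumption: the server uses whatever model it loaded)
        "messages": [{"role": "user", "content": prompt}],
    }
    return url, json.dumps(payload).encode("utf-8")

def ask(host, port, prompt, timeout=120):
    """Send the prompt to the server and return the reply text."""
    url, body = build_chat_request(host, port, prompt)
    req = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]

# Usage, with a hypothetical LAN address for the machine running the model:
#   print(ask("192.168.1.50", 8080, "Say hi in five words."))   # llamafile
#   print(ask("192.168.1.50", 5001, "Say hi in five words."))   # kobold.cpp
```

The server has to be listening on the LAN interface rather than only on localhost for this to work from another device.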

Local LLM gang represent! ✌️

Local LLMs aren’t perfect but are getting more usable. There are abliterated models and uncensored fine-tunes to choose from if you don’t like your LLM rejecting your questions.

To see if it can do it, and how accurate its general knowledge is compared to the real data. A locally hosted LLM doesn't leak private data to the internet.

Most webpages and Reddit posts in search results are themselves full of LLM-generated slop now. At this stage of the internet, if you're gonna consume slop one way or the other, it might as well be on your own terms by self-hosting an open-weights, open-license LLM that can directly retrieve information from fact databases like WolframAlpha, Wikipedia, the World Factbook, etc. through RAG. It's never going to be perfect, but it's getting better every year.
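
To make the RAG part concrete, here's a bare-bones sketch of the idea: fetch a passage from a fact source and put it in front of the question before it goes to the model. It assumes Wikipedia's REST summary endpoint and its `extract` field; the function names, prompt wording, and User-Agent string are all made up for illustration, and a real setup would add search, chunking, and ranking on top.

```python
import json
import urllib.parse
import urllib.request

def wikipedia_summary_url(title):
    """URL of Wikipedia's REST summary endpoint for a page title."""
    return ("https://en.wikipedia.org/api/rest_v1/page/summary/"
            + urllib.parse.quote(title.replace(" ", "_"), safe=""))

def fetch_summary(title, timeout=30):
    """Fetch the plain-text summary of a Wikipedia page (needs internet)."""
    req = urllib.request.Request(
        wikipedia_summary_url(title),
        headers={"User-Agent": "local-rag-sketch/0.1"},  # hypothetical UA string
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)["extract"]  # assumption: page exists and has an extract

def build_rag_prompt(question, passages):
    """Put the retrieved passages in front of the question."""
    context = "\n\n".join(f"[{i}] {p}" for i, p in enumerate(passages, 1))
    return (
        "Answer using only the sources below. "
        "If they don't contain the answer, say so.\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )

# Usage: retrieve, build the prompt, then send it to your local model.
#   prompt = build_rag_prompt("What is Vulkan?", [fetch_summary("Vulkan")])
```

Only the retrieval step touches the internet here; the question and the answer stay on your own machine.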

Look, being real, they would get away with it no matter which decrepit old man was in office or what their politics are. America is a corporatocracy wearing the skin of democracy. When the IRS audited Microsoft for tax evasion, the IRS got sued and defunded through lobbying to the point of being forced to back off. Fucking Microsoft took down the IRS. The world has changed and our old institutions of power are waning.